Хэрэглэгч:Timur/Ноорог/Тоон анализ

Тоон анализ нь тасралтгүй математикийн бодлогуудад зориулсан алгоритмуудыг судалдаг математикийн салбар юм (үүгээрээ дискрет математикаас ялгаатай).

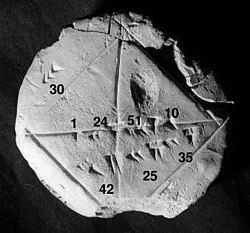

The earliest mathematical writing is perhaps the famous Babylonian tablet Plimpton 322, dating from approximately 1800 BC. On it one can read a list of pythagorean triples: triples of numbers, like (3,4,5), which are the lengths of the sides of a right-angle triangle. The Babylonian tablet YBC 7289 gives a sexagesimal approximation of , [1] which is the length of the diagonal of a square whose side measures one unit of length. Being able to compute the sides of a triangle (and hence, being able to compute square roots) is extremely important, for instance, in carpentry and construction[2]. In a square wall section that is two meters by two meters, a diagonal beam has to be meters long.[3]

Numerical analysis continues this long tradition of practical mathematical calculations. Much like the Babylonian approximation to , modern numerical analysis does not seek exact answers, because exact answers are impossible to obtain in practice. Instead, much of numerical analysis is concerned with obtaining approximate solutions while maintaining reasonable bounds on errors.

Numerical analysis naturally finds applications in all fields of engineering and the physical sciences, but in the 21st century, the life sciences and even the arts have adopted elements of scientific computations. Ordinary differential equations appear in the movement of heavenly bodies (planets, stars and galaxies); optimization occurs in portfolio management; numerical linear algebra is essential to quantitative psychology; stochastic differential equations and Markov chains are essential in simulating living cells for medicine and biology.

Before the advent of modern computers numerical methods often depended on hand interpolation in large printed tables. Nowadays (after mid 20th century) these tables have fallen into disuse, because computers can calculate the required functions. The interpolation algorithms nevertheless may be used as part of the software for solving differential equations and the like.

Ерөнхий удиртгал

[засварлах | кодоор засварлах]We will now outline several important themes of numerical analysis. The overall goal is the design and analysis of techniques to give approximate solutions to hard problems. To fix ideas, the reader might consider the following problems and methods:

- If a company wants to put a toothpaste commercial on television, it might produce five commercials and then choose the best one by testing each one on a focus group. This would be an example of a Monte Carlo optimization method.

- To send a rocket to the moon, rocket scientists will need a rocket simulator. This simulator will essentially be an integrator for an ordinary differential equation.

- Car companies can improve the crash safety of their vehicles by using computer simulations of car crashes. These simulations are essentially solving partial differential equations numerically.

- Hedge funds (private investment funds) use tools from all fields of numerical analysis to calculate the value of stocks and derivatives more precisely than other market participants.

- Airlines use sophisticated optimization algorithms to decide ticket prices, airplane and crew assignments and fuel needs. This field is also called operations research.

- Insurance companies use numerical programs for actuarial analysis.

Түүх

[засварлах | кодоор засварлах]The field of numerical analysis predates the invention of modern computers by many centuries. Linear interpolation was already in use more than 2000 years ago. Many great mathematicians of the past were preoccupied by numerical analysis, as is obvious from the names of important algorithms like Newton's method, Lagrange interpolation polynomial, Gaussian elimination, or Euler's method.

To facilitate computations by hand, large books were produced with formulas and tables of data such as interpolation points and function coefficients. Using these tables, often calculated out to 16 decimal places or more for some functions, one could look up values to plug into the formulas given and achieve very good numerical estimates of some functions. The canonical work in the field is the NIST publication edited by Abramowitz and Stegun, a 1000-plus page book of a very large number of commonly used formulas and functions and their values at many points. The function values are no longer very useful when a computer is available, but the large listing of formulas can still be very handy.

The mechanical calculator was also developed as a tool for hand computation. These calculators evolved into electronic computers in the 1940s, and it was then found that these computers were also useful for administrative purposes. But the invention of the computer also influenced the field of numerical analysis, since now longer and more complicated calculations could be done.

Шууд болон дараалан дөхөх аргууд

[засварлах | кодоор засварлах]|

Direct vs iterative methods Consider the problem of solving

for the unknown quantity x.

For the iterative method, apply the bisection method to f(x)=3x3+4. The initial values are a=0, b=3, f(a)=4, f(b)=85.

We conclude from this table that the solution is between 1.875 and 2.0625. The algorithm might return any number in that range with an error less than 0.2. Discretization and numerical integration In a two hour race, we have measured the speed of the car at three instants and recorded them in the following table. Time 0:20 1:00 1:40 Km/h 140 150 180 A discretization would be to say that the speed of the car was constant from 0:00 to 0:40, then from 0:40 to 1:20 and finally from 1:20 to 2:00. For instance, the total distance traveled in the first 40 minutes is approximately (2/3h x 140Km/h)=93.3Km. This would allow us to estimate the total distance traveled as 93.3Km + 100Km + 120Km = 313.3Km, which is an example of numerical integration (see below) using a Riemann sum, because displacement is the integral of velocity. Ill posed problem: Take the function f(x)=1/(x-1). Note that f(1.1)=10 and f(1.001)=1000: a change in x of less than 0.1 turns into a change in f(x) of nearly 1000. Evaluating f(x) near x=1 is an ill-conditioned problem. Well-posed problem: By contrast, the function is continuous and so evaluating it is well-posed. |

Direct methods compute the solution to a problem in a finite number of steps. These methods would give the precise answer if they were performed in infinite precision arithmetic. Examples include Gaussian elimination, the QR factorization method for solving systems of linear equations, and the simplex method of linear programming. In practice, finite precision is used and the result is an approximation of the true solution (assuming stability).

In contrast to direct methods, iterative methods are not expected to terminate in a number of steps. Starting from an initial guess, iterative methods form successive approximations that converge to the exact solution only in the limit. A convergence criterion is specified in order to decide when a sufficiently accurate solution has (hopefully) been found. Even in infinite precision arithmetic these methods would not reach the solution in finitely many steps (in general). Examples include Newton's method, the bisection method, and Jacobi iteration. In computational matrix algebra, iterative methods are generally needed for large problems.

Iterative methods are more common than direct methods in numerical analysis. Some methods are direct in principle but are usually used as though they were not, e.g. GMRES and the conjugate gradient method. For these methods the number of steps needed to obtain the exact solution is so large that an approximation is accepted in the same manner as for an iterative method.

Дискретчлэл

[засварлах | кодоор засварлах]Furthermore, continuous problems must sometimes be replaced by a discrete problem whose solution is known to approximate that of the continuous problem; this process is called discretization. For example, the solution of a differential equation is a function. This function must be represented by a finite amount of data, for instance by its value at a finite number of points at its domain, even though this domain is a continuum.

Алдаа үүсэх болон тархах

[засварлах | кодоор засварлах]The study of errors forms an important part of numerical analysis. There are several ways in which error can be introduced in the solution of the problem.

Тоймлолын алдаа

[засварлах | кодоор засварлах]Round-off errors arise because it is impossible to represent all real numbers exactly on a finite-state machine (which is what all practical digital computers are).

On a pocket calculator, if one enters 0.0000000000001 (or the maximum number of zeros possible), then a +, and then 100000000000000 (again, the maximum number of zeros possible), one will obtain the number 100000000000000 again, and not 100000000000000.0000000000001. The calculator's answer is incorrect because of roundoff in the calculation.

Тайралтын ба дискретчлэлийн алдаа

[засварлах | кодоор засварлах]Truncation errors are committed when an iterative method is terminated and the approximate solution differs from the exact solution. Similarly, discretization induces a discretization error because the solution of the discrete problem does not coincide with the solution of the continuous problem. For instance, in the iteration in the sidebar to compute the solution of , after 10 or so iterations, we conclude that the root is roughly 1.99 (for example). We therefore have a truncation error of 0.01.

Once an error is generated, it will generally propagate through the calculation. For instance, we have already noted that the operation + on a calculator (or a computer) is inexact. It follows that a calculation of the type a+b+c+d+e is even more inexact.

Тоон тогтвортой байдал ба энгийн тавилт

[засварлах | кодоор засварлах]This leads to the notion of numerical stability: an algorithm is numerically stable if an error, once it is generated, does not grow too much during the calculation. This is only possible if the problem is well-conditioned, meaning that the solution changes by only a small amount if the problem data are changed by a small amount. Indeed, if a problem is ill-conditioned, then any error in the data will grow a lot.

However, an algorithm that solves a well-conditioned problem may or may not be numerically stable. An art of numerical analysis is to find a stable algorithm for solving a well-posed mathematical problem. For instance, computing the square root of 2 (which is roughly 1.41421) is a well-posed problem. Many algorithms solve this problem by starting with an initial approximation x1 to , for instance x1=1.4, and then computing improved guesses x2, x3, etc... One such method is the famous Babylonian method, which is given by xk+1=xk/2+1/xk. Another iteration, which we will call Method X, is given by xk+1=(xk2-2)2+xk.[4] We have calculated a few iterations of each scheme in table form below, with initial guesses x1=1.4 and x1=1.42.

| Babylonian | Babylonian | Method X | Method X |

|---|---|---|---|

| x1=1.4 | x1=1.42 | x1=1.4 | x1=1.42 |

| x2=1.4142857... | x2=1.41422535... | x2=1.4016 | x2=1.42026896 |

| x3=1.414213564... | x3=1.41421356242... | x3=1.4028614... | x3=1.42056... |

| ... | ... | ||

| x1000000=1.41421... | x28=7280.2284... |

Observe that the Babylonian method converges fast regardless of the initial guess, whereas Method X converges extremely slowly with initial guess 1.4 and diverges for initial guess 1.42. Hence, the Babylonian method is said to be numerically stable, while Method X is said to be numerically unstable.

Судалгааны талбарууд

[засварлах | кодоор засварлах]The field of numerical analysis is divided in different disciplines according to the problem that is to be solved.

Функцийн утгыг тооцоолох

[засварлах | кодоор засварлах]One of the simplest problems is the evaluation of a function at a given point. The most straightforward approach, of just plugging in the number in the formula is sometimes not very efficient. For polynomials, a better approach is using the Horner scheme, since it reduces the necessary number of multiplications and additions. Generally, it is important to estimate and control round-off errors arising from the use of floating point arithmetic.

|

Interpolation: We have observed the temperature to vary from 20 degrees Celsius at 1:00 to 14 degrees at 3:00. A linear interpolation of this data would conclude that it was 17 degrees at 2:00 and 18.5 degrees at 1:30pm. Extrapolation: If the gross domestic product of a country has been growing an average of 5% per year and was 100 billion dollars last year, we might extrapolate that it will be 105 billion dollars this year. Regression: In linear regression, given n points, we compute a line that passes as close as possible to those n points.  Optimization: Say you sell lemonade at a lemonade stand, and notice that at 1$, you can sell 197 glasses of lemonade per day, and that for each increase of $0.01, you will sell one less lemonade per day. If you could charge $1.485, you would maximize your profit, but due to the constraint of having to charge a whole cent amount, charging $1.49 per glass will yield the maximum income of $220.52 per day.  Differential equation: If you set up 100 fans to blow air from one end of the room to the other and then you drop a feather into the wind, what happens? The feather will follow the air currents, which may be very complex. One approximation is to measure the speed at which the air is blowing near the feather every second, and advance the simulated feather as if it were moving in a straight line at that same speed for one second, before measuring the wind speed again. This is called the Euler method for solving an ordinary differential equation. |

Интерполяци, экстраполяци ба регресс

[засварлах | кодоор засварлах]Interpolation solves the following problem: given the value of some unknown function at a number of points, what value does that function have at some other point between the given points? A very simple method is to use linear interpolation, which assumes that the unknown function is linear between every pair of successive points. This can be generalized to polynomial interpolation, which is sometimes more accurate but suffers from Runge's phenomenon. Other interpolation methods use localized functions like splines or wavelets.

Extrapolation is very similar to interpolation, except that now we want to find the value of the unknown function at a point which is outside the given points.

Regression is also similar, but it takes into account that the data is imprecise. Given some points, and a measurement of the value of some function at these points (with an error), we want to determine the unknown function. The least squares-method is one popular way to achieve this.

Тэгшитгэл болон тэгшитгэлүүдийн системийг бодох

[засварлах | кодоор засварлах]Another fundamental problem is computing the solution of some given equation. Two cases are commonly distinguished, depending on whether the equation is linear or not. For instance, the equation is linear while is not.

Much effort has been put in the development of methods for solving systems of linear equations. Standard direct methods i.e. methods that use some matrix decomposition are Gaussian elimination, LU decomposition, Cholesky decomposition for symmetric (or hermitian) and positive-definite matrix, and QR decomposition for non-square matrices. Iterative methods such as the Jacobi method, Gauss-Seidel method, successive over-relaxation and conjugate gradient method are usually preferred for large systems.

Root-finding algorithms are used to solve nonlinear equations (they are so named since a root of a function is an argument for which the function yields zero). If the function is differentiable and the derivative is known, then Newton's method is a popular choice. Linearization is another technique for solving nonlinear equations.

Хувийн утгын болон онцгой утгын бодлогуудыг бодох

[засварлах | кодоор засварлах]Several important problems can be phrased in terms of eigenvalue decompositions or singular value decompositions. For instance, the spectral image compression algorithm [5] is based on the singular value decomposition. The corresponding tool in statistics is called principal component analysis. One application is to automatically find the 100 top subjects of discussion on the web, and to then classify each web page according to which subject it belongs to.

Оптимизаци

[засварлах | кодоор засварлах]Optimization problems ask for the point at which a given function is maximized (or minimized). Often, the point also has to satisfy some constraints.

The field of optimization is further split in several subfields, depending on the form of the objective function and the constraint. For instance, linear programming deals with the case that both the objective function and the constraints are linear. A famous method in linear programming is the simplex method.

The method of Lagrange multipliers can be used to reduce optimization problems with constraints to unconstrained optimization problems.

Интеграл тооцоолох

[засварлах | кодоор засварлах]Numerical integration, in some instances also known as numerical quadrature, asks for the value of a definite integral. Popular methods use one of the Newton-Cotes formulas (like the midpoint rule or Simpson's rule) or Gaussian quadrature. These methods rely on a "divide and conquer" strategy, whereby an integral on a relatively large set is broken down into integrals on smaller sets. In higher dimensions, where these methods become prohibitively expensive in terms of computational effort, one may use Monte Carlo or quasi-Monte Carlo methods (see Monte Carlo integration), or, in modestly large dimensions, the method of sparse grids.

Дифференциал тэгшитгэлүүд

[засварлах | кодоор засварлах]Numerical analysis is also concerned with computing (in an approximate way) the solution of differential equations, both ordinary differential equations and partial differential equations.

Partial differential equations are solved by first discretizing the equation, bringing it into a finite-dimensional subspace. This can be done by a finite element method, a finite difference method, or (particularly in engineering) a finite volume method. The theoretical justification of these methods often involves theorems from functional analysis. This reduces the problem to the solution of an algebraic equation.

Програм хангамж

[засварлах | кодоор засварлах]Since the late twentieth century, most algorithms are implemented and run on a computer. The Netlib repository contains various collections of software routines for numerical problems, mostly in Fortran and C. Commercial products implementing many different numerical algorithms include the IMSL and NAG libraries; a free alternative is the GNU Scientific Library.

MATLAB is a popular commercial programming language for numerical scientific calculations, but there are commercial alternatives such as S-PLUS and IDL, as well as free and open source alternatives such as FreeMat, GNU Octave (similar to Matlab), IT++ (a C++ library), R (similar to S-PLUS) and certain variants of Python. Performance varies widely: while vector and matrix operations are usually fast, scalar loops vary in speed by more than an order of magnitude. [6]

Many computer algebra systems such as Mathematica or Maple (free software systems include SAGE, Maxima, Axiom, calc and Yacas), can also be used for numerical computations. However, their strength typically lies in symbolic computations. Also, any spreadsheet software can be used to solve simple problems relating to numerical analysis.

Мөн үзэх

[засварлах | кодоор засварлах]- Тооцон бодох шинжлэх ухаан

- Тоон шугаман алгебр

- Грам-Шмидт процесс

- Тоон интегралчлал

- Тоон дифференциалчлал

Тэмдэглэлүүд

[засварлах | кодоор засварлах]- ↑ The approximation of the square root of 2 is four sexagesimal figures, which is about six decimal figures. 1 + 24/60 + 51/602 + 10/603 = 1.41421296...

Photograph, illustration, and description of the root(2) tablet from the Yale Babylonian Collection - ↑ The New Zealand Qualification authority specifically mentions this skill in document 13004 version 2, dated 17 october 2003 titled CARPENTRY THEORY: Demonstrate knowledge of setting out a building

- ↑ By the Pythagorean theorem, a square whose side is 2 meters has a diagonal of length meters.

- ↑ This is a fixed point iteration for the equation , whose solutions include . The iterates always move to the right since . Hence converges and diverges.

- ↑ The Singular Value Decomposition and Its Applications in Image Compression

- ↑ Speed comparison of various number crunching packages

Ном хэвлэл

[засварлах | кодоор засварлах]- Gilat, Amos (2004). MATLAB: An Introduction with Applications (2nd edition ed.). John Wiley & Sons. ISBN 0-471-69420-7.

{{cite book}}:|edition=has extra text (help); Cite has empty unknown parameter:|coauthors=(help) - Hildebrand, F. B. (1974). Introduction to Numerical Analysis (2nd edition ed.). McGraw-Hill. ISBN 0-070-28761-9.

{{cite book}}:|edition=has extra text (help) - Leader, Jeffery J. (2004). Numerical Analysis and Scientific Computation. Addison Wesley. ISBN 0-201-73499-0.

- Trefethen, Lloyd N. (2006). "Numerical analysis", 20 pages. To appear in: Timothy Gowers and June Barrow-Green (editors), Princeton Companion of Mathematics, Princeton University Press.

Гадны линкүүд

[засварлах | кодоор засварлах]- Numerische Mathematik, volumes 1-66, Springer, 1959-1994 (searchable; pages are images).